Google Webmaster Conference (2019)

Google finally released the presentations from the Webmaster Conference in 2019! There was so much great information that I wish I had been able to share when I left.

You get a much different understanding of what Google actually does behind the scenes, and this has steered the direction and development of the Feast Plugin over the last 6 months.

They also give you an understanding of how insanely complex what they're doing is, and why they do everything by algorithm instead of manually.

There is some rich information in here.

I'll embed my takeaways along with the videos down here.


Deduplication

- Google tries very hard to understand which page on your site is the best match for the user's search intent

- Pages with similar content send conflicting signals

- Google tries to detect boilerplate (header, navigation menu, sidebar, footer) and exclude these from the page's content

- Content checksums give Google an easy way to determine which sections of a page have or haven't been updated during a recrawl, and to identify duplicate content between pages (e.g. static "reusable blocks" are all duplicate content, but the advanced_jump_to shortcode would not be)

- rel="canonical", sitemaps and internal linking provide hints, but they aren't as good as redirects, which are much stronger signals

- Their #1 concern (outside of hacking) is user experience. They want to send users to the best possible page for the query.

- It can be incredibly valuable to delete content that might be competing with other content on your site and redirect it to a single page, to condense the signals to that page and boost its ranking ability
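To make the checksum idea from the list above a bit more concrete, here is a minimal sketch. It is not Google's actual algorithm (the talk doesn't spell that out); it just assumes each page has already been split into content blocks, and the function names and sample text are made up for illustration.

```python
import hashlib
import re


def block_checksum(text: str) -> str:
    """Return a SHA-256 checksum of a content block, ignoring case and extra whitespace."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def shared_blocks(page_a: list[str], page_b: list[str]) -> list[str]:
    """Return the blocks of page_a whose checksum also appears in page_b."""
    seen = {block_checksum(block) for block in page_b}
    return [block for block in page_a if block_checksum(block) in seen]


# Hypothetical pages, each already split into content blocks.
brownies = ["Paleo brownies", "Store in an airtight container for up to 5 days."]
blondies = ["Paleo blondies", "Store in an airtight  container for up to 5 days."]

# The storage note is flagged as shared, even with the stray double space.
print(shared_blocks(brownies, blondies))
```

The same comparison works across crawls: if a block's checksum matches the one stored from the last visit, that block hasn't changed.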

The tl;dr here is that you shouldn't have categories that overlap with posts, or posts that overlap with other posts. At myculturedpalate.com we inherited 7 different "paleo brownie" recipes because the former owner had been told to create "similar content".

We unpublished 6 of those and redirected them to the one that was ranking the best, and it climbed up in the search results.
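If you have a pile of duplicates to consolidate like this, a small script can write the redirect rules for you. This is only a sketch: it assumes an Apache server (the `Redirect 301` directive comes from Apache's mod_alias), and the example.com URLs and the `redirect_rules` helper are hypothetical. On WordPress you'd more likely enter the same old/new pairs into a redirect plugin.

```python
from urllib.parse import urlparse


def redirect_rules(duplicates: list[str], target: str) -> list[str]:
    """Build one Apache `Redirect 301` line per duplicate URL, pointing at target."""
    rules = []
    for url in duplicates:
        path = urlparse(url).path  # Redirect matches on the URL path, not the full URL
        rules.append(f"Redirect 301 {path} {target}")
    return rules


# Hypothetical duplicate posts and the one page we want to keep.
duplicates = [
    "https://example.com/fudgy-paleo-brownies/",
    "https://example.com/easy-paleo-brownies/",
]
for rule in redirect_rules(duplicates, "https://example.com/paleo-brownies/"):
    print(rule)
```

Check the generated rules before deploying them: `Redirect` matches by path prefix, so a duplicate path that is a prefix of the target path would need a more specific rule.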

Additionally:

We use (simplified) URL length as a factor when picking a canonical URL. It doesn't mean the content will rank better, but if both pages have the same content on the site, then we might pick the shorter URL to show. Like with language, I wouldn't worry about this for ranking.

John Mueller, Google

Rendering

Rendering is what happens when the Googlebot loads your page, and this is where a crappy ad network and excessive DOM nodes can really screw you over.

- Googlebot uses Chrome to fetch and render your page (as of 2019) so it "sees" what you would see in the Chrome browser

- Googlebot limits how much it crawls your website at once, which means you want it to focus on high quality content - this is why it's so important to noindex and delete content following Casey's advice: https://www.semrush.com/webinars/work-smarter-not-harder-how-to-dominate-2019-with-reusable-content/

- Googlebot will limit itself to 50-60 resources per page, which means if you have too many plugins that load too many resources you can wind up with content that doesn't render. Limit your plugins!

- Google overcaches due to computing constraints, which means updates you make might not be reflected for several days or weeks as your pages get recrawled over time. This is why it sometimes takes so long for your content to get updated

- External comment systems are specifically called out here - these are not a good idea (for many other reasons as well) - use your own built-in WordPress comments

- JavaScript is problematic: it is run, but it will be cut off if it runs too long or consumes too many resources

- Bad ad networks should be avoided as they absolutely destroy Googlebot content fetching and impact your ability to rank for content.

- This is why we say don't run an ad network that doesn't know what they're doing. Next time your (non-Mediavine) ad network tells you their JavaScript and page speed are fine, call them out on it! Then drop them like a sack of potatoes.

This presentation gives a good overview of why the DOM node and excessive JavaScript warnings in the PageSpeed Insights tool deserve your attention.

This is why Feast has been working on eliminating JavaScript from our themes (e.g. the Modern Mobile Menu is all CSS, and the Advanced Jump To Links uses native browser functionality, not JavaScript).
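For a rough feel of how heavy a page is, you can count its elements and the resources it references. The sketch below uses only Python's built-in `html.parser`; the `PageStats` class and the sample markup are made up for illustration. It only sees what is in the static HTML, not anything a script loads at runtime, so treat it as a quick sanity check rather than a replacement for PageSpeed Insights.

```python
from html.parser import HTMLParser


class PageStats(HTMLParser):
    """Count elements and externally loaded resources in an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.elements = 0   # every opening tag, a rough proxy for DOM nodes
        self.resources = 0  # scripts, images, iframes and stylesheets fetched by URL

    def handle_starttag(self, tag, attrs):
        self.elements += 1
        attributes = dict(attrs)
        if tag in ("script", "img", "iframe") and attributes.get("src"):
            self.resources += 1
        elif tag == "link" and attributes.get("rel") == "stylesheet" and attributes.get("href"):
            self.resources += 1


# Hypothetical page markup.
html = """
<html><head>
<link rel="stylesheet" href="/style.css">
<script src="/ads.js"></script>
</head><body>
<h1>Paleo brownies</h1>
<img src="/paleo-brownies.jpg" alt="Paleo brownies on a plate">
<script>console.log("inline")</script>
</body></html>
"""

stats = PageStats()
stats.feed(html)
print(stats.elements, stats.resources)  # prints "8 3"; the inline script is not a fetched resource
```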

Titles, snippets and results previews

- Google wants to showcase both depth on a topic and diversity of content and let the user choose which they want

- The same algorithm that decides if a page is relevant can also decide which part of the page is relevant. This is why we recommend using the Advanced Jump To Links with our Heading Guidelines to segment your content and offer multiple parts of your page to match as many queries as possible

- Galleries may be a super useful feature in the future, where you can tie recipe steps to process shots (we haven't seen this applied to recipe queries yet)

- Good site structure can contribute to additional site links in the search results, and we've seen "site links" being generated from the Advanced Jump To Links

- Facts may be algorithmically extracted from on-page tables

- Markup/schema takes priority over algorithmically-extracted data

- The concept of "dominant" elements is introduced here - lists, tables, and for recipes, maybe even the recipe card - and is loosely tied to position on page - we may want to try testing inserting recipe cards at the top of posts and seeing what effect this has on ranking and rich results

Relevance infuses everything - if you have unrelated content (such as personal stories and anecdotes) then your content is not as relevant overall, but Google knows how to surface the relevant parts of your content for visitors in the search results.

Stay on topic!
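Since markup/schema takes priority over algorithmically-extracted data, it helps to know what that markup looks like. Here is a minimal sketch that builds schema.org Recipe JSON-LD. The `recipe_jsonld` helper and the sample recipe are hypothetical, it fills in only a handful of the available properties, and in practice your recipe card plugin generates this for you.

```python
import json


def recipe_jsonld(name: str, image_url: str, ingredients: list[str], steps: list[str]) -> str:
    """Return schema.org Recipe markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Recipe",
        "name": name,
        "image": [image_url],
        "recipeIngredient": ingredients,
        "recipeInstructions": [
            {"@type": "HowToStep", "text": step} for step in steps
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical recipe data.
markup = recipe_jsonld(
    name="Paleo Brownies",
    image_url="https://example.com/paleo-brownies.jpg",
    ingredients=["1 cup almond flour", "1/2 cup cocoa powder"],
    steps=["Mix the dry ingredients.", "Bake until set."],
)
print(markup)
```

The output goes inside a `<script type="application/ld+json">` tag in the page.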

Google images: best practices for search

- Google is less sure about user intent for image search, so they offer high variety with rich snippets/content

- They're using recipe schema to incorporate the structured data into the Google Image Search results

- Use descriptive file names (no DSCN-0004.jpg) and descriptive titles (but use alt text only for screen readers)

- Check out those thumbnails - they're either horizontal or square - this is why we released the Modern Thumbnails and now recommend setting your featured image to 1200x1200 square

- Use high quality images! That means large and crisp

- Text surrounding images provides context, relevance and increases quality signals

The two biggest failures we see in food blogging are that people aren't optimizing their image names or using image captions.
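Renaming images doesn't have to be a chore. As a sketch, a helper like the one below turns a recipe title into a descriptive file name you can use instead of DSCN-0004.jpg. The `descriptive_filename` name and the lowercase-with-hyphens convention are our assumptions, not something Google prescribes.

```python
import re
import unicodedata


def descriptive_filename(title: str, extension: str = "jpg") -> str:
    """Turn a recipe title into a lowercase, hyphen-separated file name."""
    # Strip accents so "Crème" becomes "Creme" instead of being dropped entirely.
    ascii_title = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    # Collapse every run of non-alphanumeric characters into a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_title.lower()).strip("-")
    return f"{slug}.{extension}"


print(descriptive_filename("Paleo Brownies (Fudgy!)"))  # prints "paleo-brownies-fudgy.jpg"
```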

Takeaway

Overall, there's a definite trend where, if Google isn't sure they know the user intent, they're starting to surface more variety of content.

- Google is not a single monolithic beast, but rather, dozens of quasi-independent units that sometimes, but not always, integrate with each other - this is why there's often a discrepancy between search results and Search Console, or recipe carousel vs. normal search engine listings vs. images

- They're not all-powerful and all-knowing, and the more you can spoon-feed them clear ranking signals and structured data, the more they can help you

This could be a big reason why traffic levels everywhere seem to have declined over the last year. Where once Google thought anything food related was a recipe, they're now offering restaurants in maps, order/delivery apps, FAQs and websites and more variety in general.

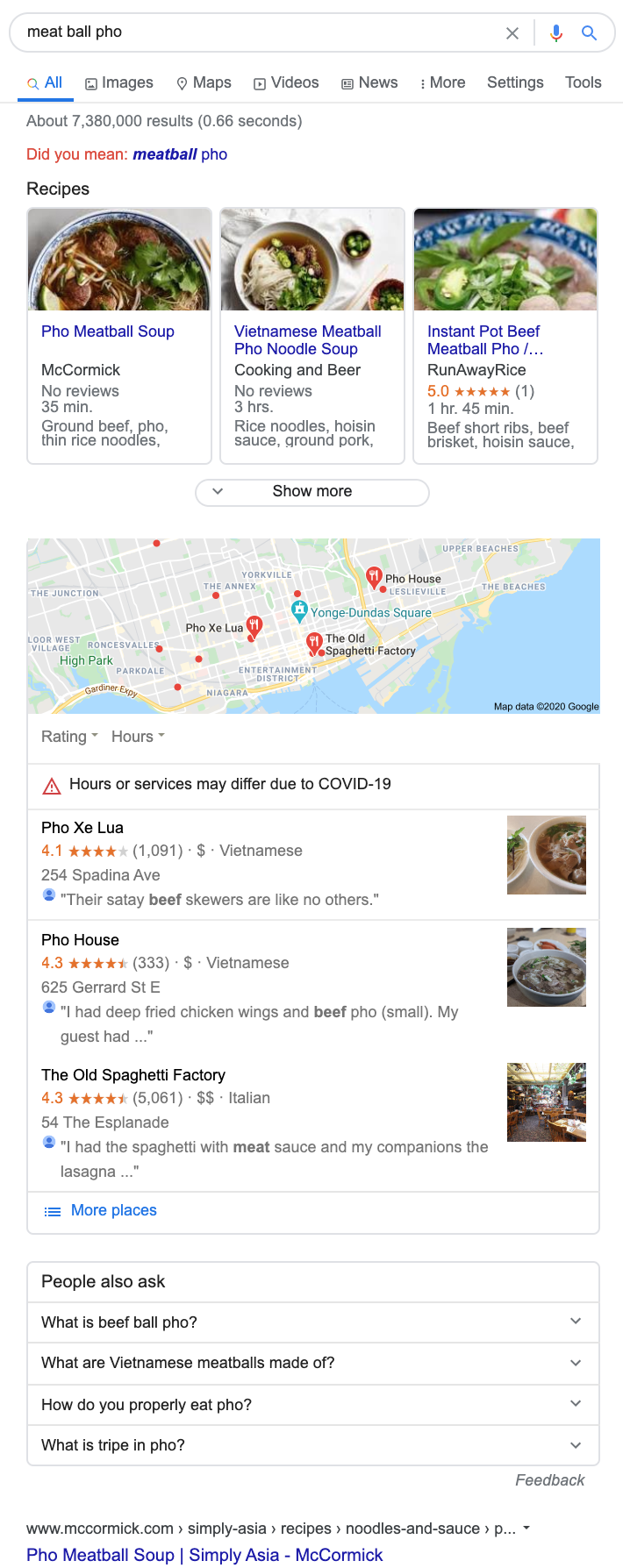

If we once had 10 recipe results for the keyword "meat ball pho", we now have far fewer, and the #1, #2 and #3 recipe results will get the lion's share of traffic because the results page is now shared with local listings and FAQs:

Sidenote: The Old Spaghetti Factory does not serve pho.

More than ever, recipes are super competitive, so you need to step up your content game and:

- Use any and all structured data and tools available to you, such as the Advanced Jump To Links with good page headings

- Keep your site lean and optimized by reducing DOM nodes and limiting plugin usage and JavaScript (with the Modern Mobile Menu and Modern Homepage)

- Fill out your homepage and category pages with unique content

- Go back and optimize your existing content